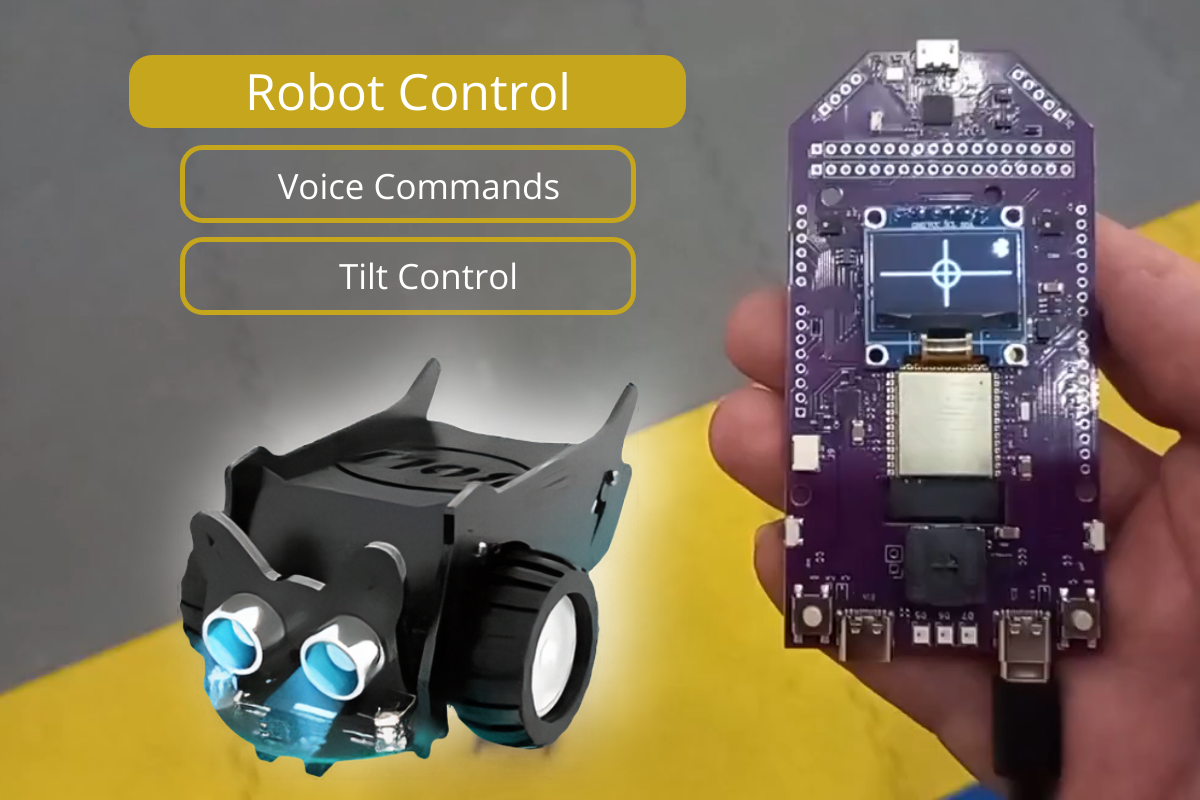

GRC AI Robot Control-Transparent Enclosure and Battery

Robot Control App + GRC DevBoard

Control your devices, such as robots, using voice commands and by tilting the board in different directions.

Check out our open-source Arduino program for the CrowBot-BOLT robot, which is ready to use right out of the box! We designed it to showcase voice control and tilt-based movement. You can also use it as a reference for your projects, adapt it for other robots and devices, or explore new possibilities with the CrowBot.

The app isn’t limited to CrowBot: you can use it with various robots and devices. It provides access to output data and lets you send commands, manage controls, and process data, making integration and customization easy for your specific tasks and equipment. Refer to the documentation for details: Open-source software component on GitHub

Where can it be used?

• 🎁 Gifts: A fun and unique device for anyone interested in Arduino, robotics, or electronics. A great way to explore cutting-edge tech and dive into new hands-on experiences.
• 🤖 Learning Robotics: Designed for curious kids and their parents who want to explore the world of AI-powered technologies together.
• 🏫 Schools and Robotics Clubs: We offer technical support for teachers and are open to collaboration and joint events.
• 🛠 DIY Projects: Control your drones, RC cars, and robots in a whole new way, with motion and voice commands.

Additional free applications

Voice PIN
This application allows the DevBoard to function as an electronic switch that activates when a user says a pre-set four-digit voice PIN code. Easily create an AI smart lock activated by voice PIN using the GRC AI DevBoard, an Electromagnetic Lock, and Crowtail-Relay 2.0. It’s simple to assemble using the ready-made parts and schematics.

Teacher 3+
This interactive English learning app helps kids learn through pictures, sounds, and simple math (addition and subtraction up to 20). Children say their answers aloud, and AI checks if they’re correct. If they need help, they can skip or get a hint with the word and pronunciation.

Check the full description of the apps on GitHub.

Development Board Overview

Master module: ESP32-S3
Communication module: ESP32-C3

Connectivity
• USB, UART to USB interface CP2102N
• Wi-Fi 802.11 b/g/n
• Bluetooth LE: Bluetooth 5, Bluetooth mesh

Periphery
• 2 MEMS microphones
• Accelerometer MPU-9250
• 4 buttons
• Amplifier MAX98357A
• Speaker FUET_FS_1340
• 3 RGB LEDs (SK6805)
• Monochrome OLED display, 0.96″, 128×64

Power supply
• USB-C port: 5 V, 500 mA (min)
• 2-pin socket: LiPo 1S, 3.7 V

Power consumption (average): 30 mA

Check the full specification at link: GRC tinyML DevBoard Specification

Links
• The full description of Apps on Github
• Open-source component on GitHub
• GRC DevBoard Specification
• Robot Control Additional Description
• Open-source Arduino program for the CrowBot-BOLT robot
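To make the tilt-based control idea from the Robot Control App concrete, here is a minimal sketch of how accelerometer readings could be turned into movement commands. It is not the app's actual logic: the function names, the axis sign convention, the command strings, and the 15-degree dead zone are all assumptions chosen for illustration, and the readings are assumed to be in units of g.

```python
import math


def tilt_angles(ax, ay, az):
    """Return (pitch, roll) in degrees from accelerometer readings in g.

    The axis orientation is a hypothetical convention, not taken from
    the DevBoard documentation.
    """
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll


def tilt_to_command(ax, ay, az, dead_zone_deg=15.0):
    """Map a board tilt to one of five illustrative movement commands."""
    pitch, roll = tilt_angles(ax, ay, az)
    # Small tilts are ignored so the robot stays still when the board is
    # held roughly level.
    if abs(pitch) < dead_zone_deg and abs(roll) < dead_zone_deg:
        return "stop"
    # The dominant axis decides the direction.
    if abs(pitch) >= abs(roll):
        return "forward" if pitch > 0 else "backward"
    return "right" if roll > 0 else "left"


if __name__ == "__main__":
    print(tilt_to_command(0.0, 0.0, 1.0))     # board held flat
    print(tilt_to_command(-0.5, 0.0, 0.866))  # tilted about 30 degrees on one axis
```

A dead zone like this is a common way to keep sensor noise and small hand movements from triggering motion; the threshold would need tuning on real hardware.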

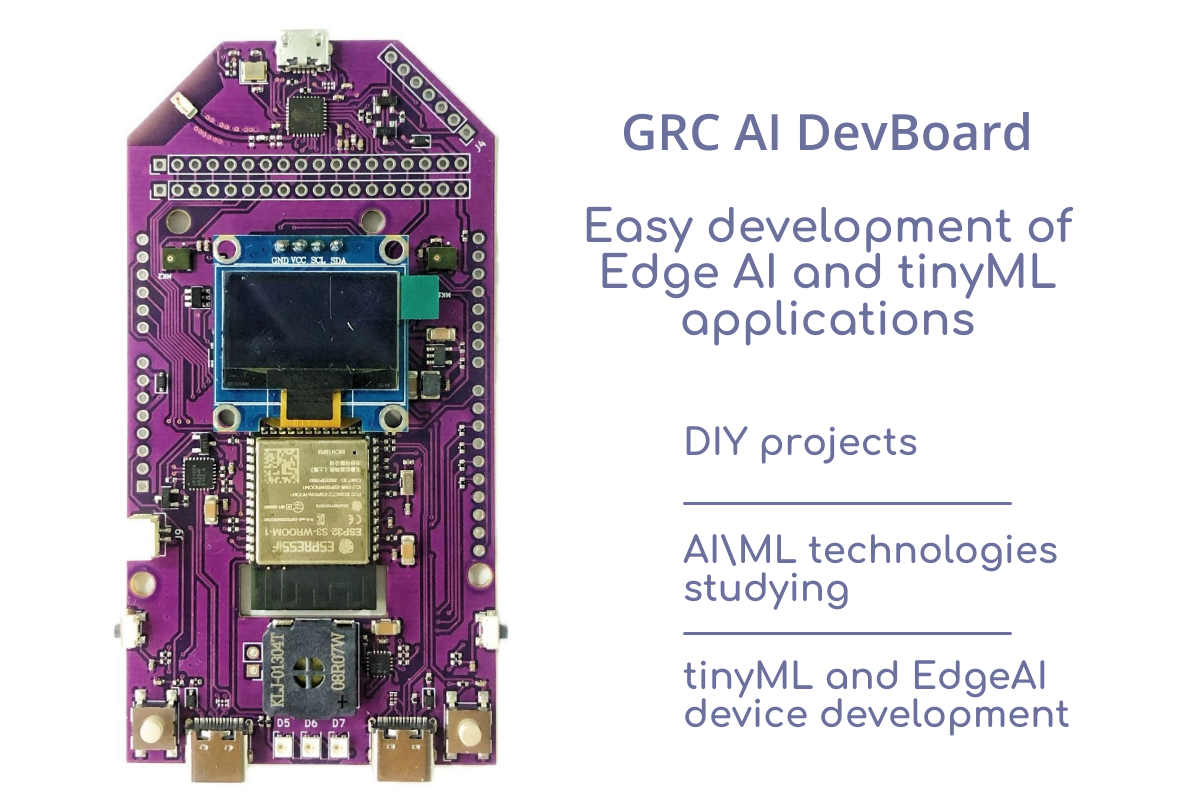

GRC AI DevBoard

Purpose
• Promptly testing ready-made, pre-configured neural networks;
• Checking the viability of ML algorithms for your tasks and selecting the AI functionality that best suits your goals;
• Using open-source software templates to design smart devices;
• Developing, debugging, and testing software for a target device.

Where Can It Be Used?
• DIY projects
• Studying AI/ML technologies
• tinyML and EdgeAI device development

Demo Applications
Download software on Github: https://github.com/Grovety/grc_devboard_apps
• Voice Relay: Voice control of devices (turn on and off).
• Sound Events detection: Baby crying, glass, dog barking, coughing.
• Teacher (English): Demo version of an app for learning English words.

Overview

Specification

Master module: ESP32-S3
Communication module: ESP32-C3

Connectivity
• USB, UART to USB interface CP2102N
• Wi-Fi 802.11 b/g/n
• Bluetooth LE: Bluetooth 5, Bluetooth mesh

Periphery
• 2 MEMS microphones
• Accelerometer MPU-9250
• 4 buttons
• Amplifier MAX98357A
• Speaker FUET_FS_1340
• 3 RGB LEDs (SK6805)
• Monochrome OLED display, 0.96″, 128×64

Power supply
• USB-C port: 5 V, 500 mA (min)
• 2-pin socket: LiPo 1S, 3.7 V

Power consumption (average): 30 mA

Check the full specification at link: https://github.com/Grovety/grc_devboard_apps/blob/main/Specification.md

Links
• Demo Applications on GitHub
• Specification
• ESP32-S3-WROOM
• MP34DT06JTR Datasheet
• MPU-9250 Datasheet

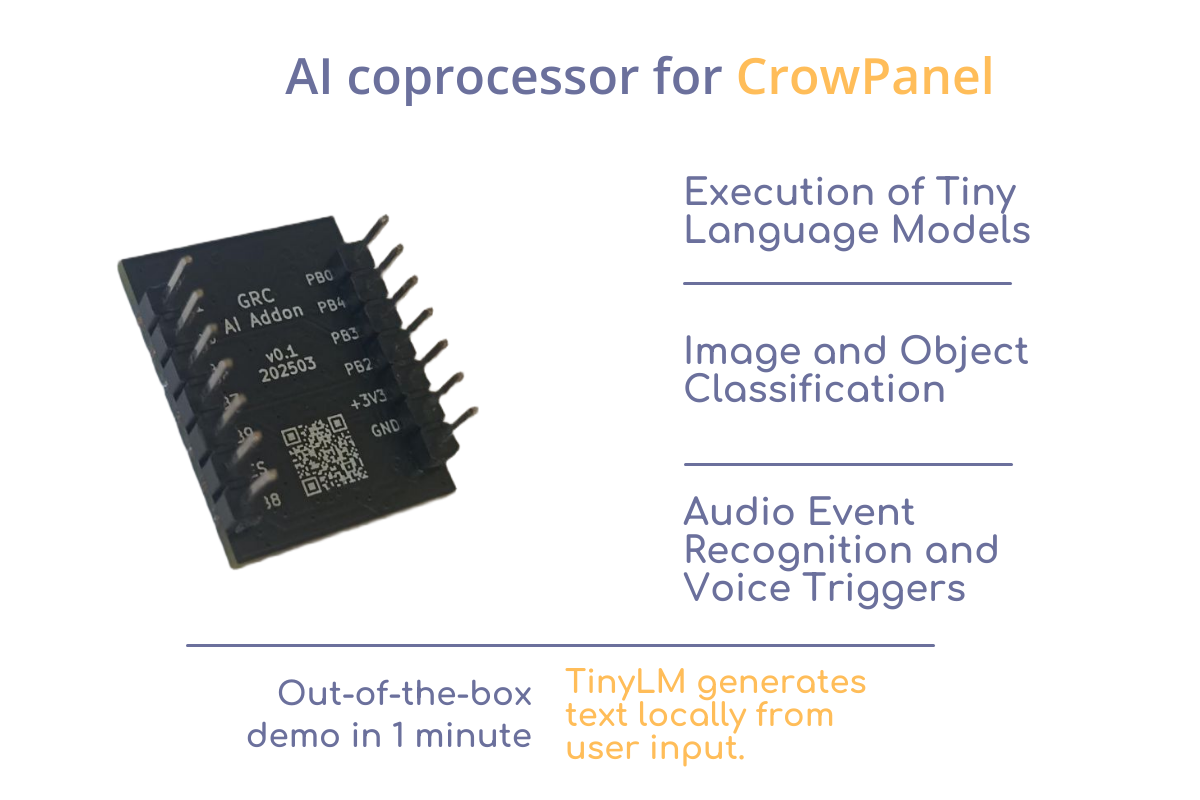

GRC AI Add-on

GRC AI Add-on for CrowPanel on HX6538

Run AI models on your CrowPanel without cloud, complexity, or extra boards. Build interactive, intelligent devices that generate stories, classify inputs, or run TinyLM models, all offline and on-device. Just plug it in.

GRC AI Add-on is a plug-in NPU module designed for CrowPanel, powered by the ultra-efficient Himax HX6538 microcontroller with Arm Cortex-M55 and Ethos-U55 NPU. It enables local neural network inference, which suits applications like text generation, classification, and voice interfaces.

Who It’s For
• Smart Device Developers → Rapid prototyping and hypothesis testing with TinyML
• Schools, Makerspaces, Tech Hubs → Practical demonstration of TinyLM/TinyML applications
• DIY Makers & AI Enthusiasts → Build offline AI projects with Tiny Language Models (TinyStories, command assistants, etc.)

Key Features

Demo: TinyStories
An interactive storytelling prototype based on the TinyStories Language Model. It showcases how compact hardware can be used for interactive natural language generation, all at the edge, with low power consumption and no internet required for model inference.

The user types or chooses a prompt on the CrowPanel touchscreen, for example, “Tell me a story about a princess.” The request is processed locally by a Tiny Language Model running on the GRC AI Add-on. Within seconds, a complete story is generated and displayed on the screen, with no internet connection or cloud services involved.

To run the TinyStories demo, a CrowPanel Advance is required for input and display. Download the CrowPanel firmware here. The same link also provides the latest firmware for the Add-on and full documentation.
Specs

AI Core
• Microcontroller: Himax HX6538-A06TDFG
• CPU: Arm Cortex-M55
• NPU: Arm Ethos-U55
• SRAM: 2 MB
• ROM: 4 MB
• External Flash: 128 Mbit (16 MB) QSPI NOR Flash

Form Factor & Pinout
• Add-on layout: 14-pin dual-row (7×2) header
• Pitch: 2.54 mm
• Board size: ~20×25 mm

Power & Performance
• Power Supply: 3.3 V (regulated by CrowPanel)
• Typical Consumption, idle: <1 mA
• Typical Consumption, active inference: ~10–20 mA depending on model
• Designed for: battery- and solar-powered deployments

Storage & Model Deployment
• Flash for models: 16 MB QSPI Flash
• Preloaded example: TinyStories (text-generation LLM)
• Model update: via firmware flashing or QSPI update tools
• Frameworks supported: CMSIS-NN, GRC SDK, TensorFlow Lite for Micro (adapted)

Links
https://github.com/Grovety/grc_ai_add-on

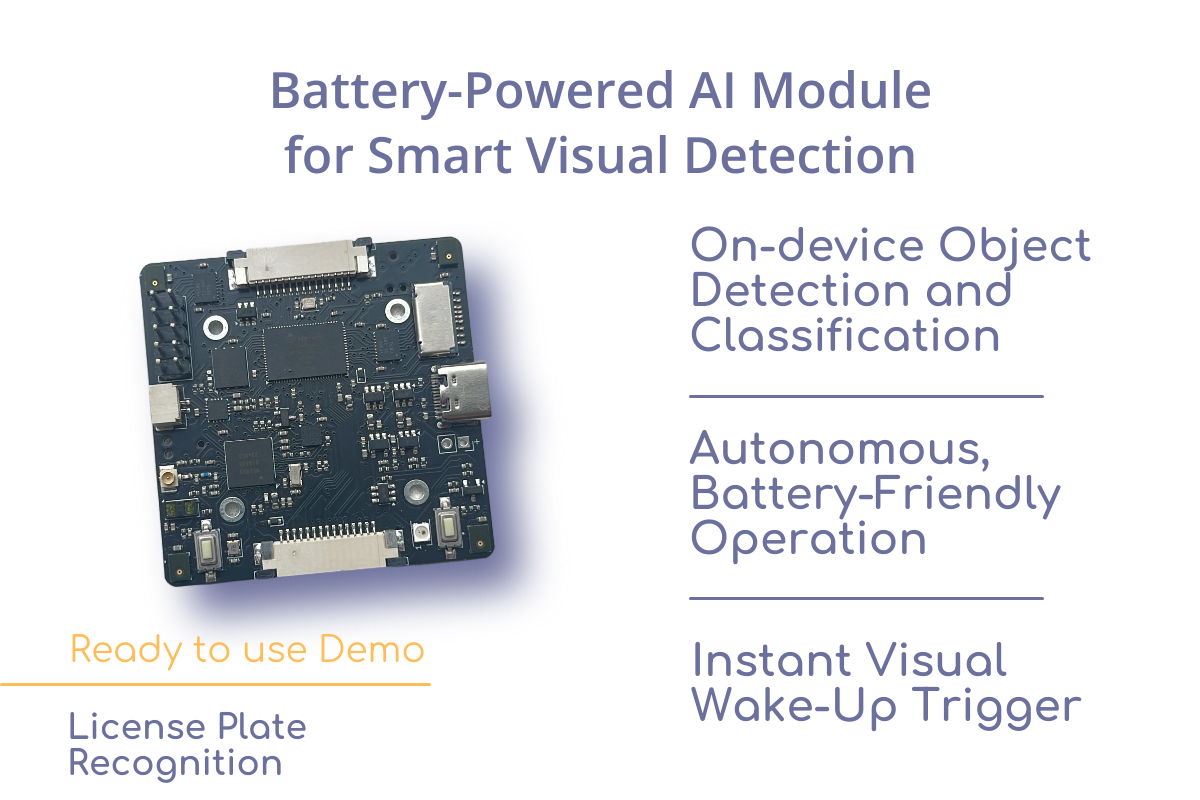

Powered Edge AI Module on Himax

Battery-Powered Edge AI Module on HX6538

Build smarter, energy-efficient devices with local AI that sees and reacts, without the cloud, without latency, and without constant power draw.

Visual Wake Word: just like a smart speaker listens for a voice cue, this module “wakes up” when it sees a human, vehicle, animal, or another object you choose.

Autonomous operation, long battery life, and instant response make it ideal for:
• Smart Doorbells: Instantly detect a person at the door and trigger alerts, with no cloud or Wi-Fi required.
• Retail People Counter: Low-power presence detection and classification to track footfall and store occupancy.
• Wireless Security Node: Battery-powered edge device with visual wake-up; sends notifications when a person or vehicle is detected.
• Access Control System: Local AI detects authorized personnel or license plates, triggering gate or door mechanisms.
• Smart Bird Feeder / Wildlife Monitor: Recognizes specific species and logs sightings autonomously in the field.
• Construction Site Monitoring: Detects unauthorized human presence or restricted zone entries in low-power mode.

Ready-to-use Example Applications
• Gate: Standalone ANPR Module for Gate & Barrier. A fully autonomous, battery-friendly module that opens your barrier or gate when it detects a recognized license plate.
• Wild: Autonomous long-term AI camera trap. Battery-powered AI camera trap for wildlife monitoring. Detects animal and bird species using onboard neural networks, with no cloud or internet required.

What can you do with it?
With this module, you can:
• Run neural networks for object detection and classification (e.g., animals, vehicles, license plates);
• Capture and process audio and video directly on the device;
• Develop fully autonomous systems that run on batteries or rechargeable power;
• Integrate it as a drop-in component into your final product.
Power Consumption and Battery Life

Sleep Mode:
• Base current consumption: 316 µA
• Applies when all peripherals are disabled (deep sleep)

Inference Mode (refers to detection & recognition networks):
• Active duration per inference: 130 ms, plus 50 ms Himax startup time per inference
• Current consumption during inference: ~30 mA
• Includes: activity of the Himax HX6538 and Ethos-U55 NPU, including internal handling of camera and peripheral interfaces
• External peripheral power consumption (camera, ToF sensor, mic, etc.) is not included

Battery Life Example: Periodic Inference (2× per minute)
Assuming the device performs inference twice per minute, the average current is calculated as the time-weighted mean of the active and sleep currents:
• Active time per minute: 0.36 s (2 × (130 + 50) ms)
• Sleep time per minute: 59.64 s
• Average current: (0.36 s × 30 mA + 59.64 s × 0.316 mA) / 60 s ≈ 0.494 mA
• With a 2500 mAh battery, expected runtime: 2500 mAh / 0.494 mA ≈ 5,060 hours (roughly 210 days)

This makes the platform well suited to long-term deployments in battery-powered applications with periodic AI inference, such as remote sensors and monitoring systems.

Power Consumption Data Available
When you purchase this module, we will also provide a detailed document outlining its power consumption in various operating modes, helping you accurately estimate energy usage in your final device.

Fast time to market
Use the module as a finished component: build your enclosure, add your model, and go to production.
• Open-source stack: Full firmware, Android app, and documentation available to accelerate development and customization.
• Ready-to-use platform: Includes camera interface, microphone array, uSD storage, ToF sensor, IMU, and Bluetooth, all pre-integrated.
• Free support for buyers
• Open-source projects for rapid product development: An open-source object detection and classification project that you can customize for your specific use case.

Ready-to-Use Software Ecosystem
The module comes with a complete open-source software stack, making it easy to start development and deploy real-world AI solutions without writing low-level code.
Supported Frameworks and Toolchains:
• TensorFlow Lite Micro: for running quantized neural networks efficiently on MCUs
• CMSIS-NN: Arm-optimized neural network kernels
• Standard Arm toolchains: compatible with Keil Studio, VSCode + PlatformIO

Everything is Open-Source:
• Preloaded firmware, example models, and full API documentation
• Android demo app with source code
• Easy model swap (replace the pre-trained network with your own)

Example Projects & References:
• Visual Wake Word demo on Arm’s Corstone-320 MLEK: https://community.arm.com/arm-community-blogs/b/ml-ai/posts/ml-ek-vww
• Official Visual Wake Word dataset and benchmark: https://github.com/tensorflow/tensorflow/blob/master/tensorflow/lite/micro/examples/person_detection/README.md
• Himax SDK and NPU documentation: https://www.himax.com.tw/products/ai-sensors/ai-accelerators/

Free Support for Buyers
When you purchase this module, you get free technical support and expert consultation to help you design and launch your own AI-powered solution. Whether you’re building a wildlife camera, smart gate, or custom embedded device, we’re here to help from PoC to production.

End-to-End Development Workflow

1. Platform Evaluation
You have a pre-configured dev kit (hardware + firmware + pre-trained model).
Run the out-of-the-box demo (e.g., animal detection) to test:
• Inference performance (FPS, accuracy)
• API integration (REST/gRPC/edge SDK)
• Hardware compatibility (CPU/GPU/NPU utilization)
Review the API specification and model compatibility guidelines via the pre-built demo.

2. Custom Model Integration
Swap the default demo neural network for a task-specific model (e.g., ANPR for local license plates) via:
• DIY path: adapt existing models;
• White-glove service: Grovety delivers a custom model (optimized for target hardware).

3. Prototype to Production
• Modify the open-source board firmware to implement custom business logic;
• Enhance the mobile app with required features and UI/UX changes;
• Finalize the hardware: use the existing enclosure or develop custom hardware.
Outcome: A ready-to-sell solution with low development costs and short time-to-market.

Subsystems & Their Functions

Under Himax control
• Image capture from camera module
• Audio acquisition from 4-microphone array
• Image processing using built-in hardware accelerators
• Image/audio processing using neural networks and Arm Ethos
• Data exchange with nRF52833 via SPI/I2C interfaces
• microSD card operations

Under nRF52833 control
• Himax communication via SPI and/or I2C interfaces
• Host communication via Bluetooth and/or USB-UART interfaces
• Power management and device configuration (Himax, accelerometer, ToF sensor)
• Battery charging control and state monitoring
• LED control and button state reading

Hardware Components List

1. Processing Units
Main MCU: Himax HX6538. Handles primary tasks:
• Neural network acceleration (Ethos-U55 NPU)
• Camera interfacing (MIPI CSI-2)
• Microphone array processing (PDM/PCM)
• uSD card storage management
Peripheral MCU: Nordic nRF52833. Manages:
• Bluetooth 5.2 Low Energy connectivity
• Power management (battery/DC-in)
• Time-of-Flight (ToF) sensor data acquisition
• Inertial Measurement Unit (IMU) processing

2. Sensors & Peripherals
Imaging:
• Camera-In (MIPI interface): primary vision input
• Camera-Out (debug/auxiliary feed)
Audio:
• Dual PDM microphones: beamforming-capable
Environmental:
• ToF sensor (VL53L5CX or equivalent)
• 6-axis IMU (Accel + Gyro)

3. Storage
• uSD card slot: for high-capacity logging (video/audio)
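The battery-life example in the Power Consumption and Battery Life section can be reproduced with a short calculation. The sketch below uses only the figures quoted there (316 µA sleep current, ~30 mA during inference, 130 ms + 50 ms per inference, two inferences per minute, a 2500 mAh battery); the function names are illustrative, and the result is an idealized estimate that ignores external peripherals, battery self-discharge, and usable-capacity derating.

```python
def average_current_ma(active_s, active_ma, sleep_ma, period_s=60.0):
    """Time-weighted average current over one period, in mA."""
    sleep_s = period_s - active_s
    return (active_s * active_ma + sleep_s * sleep_ma) / period_s


def runtime_hours(capacity_mah, avg_ma):
    """Idealized runtime: battery capacity divided by average current."""
    return capacity_mah / avg_ma


# Figures quoted in the section above.
INFERENCES_PER_MINUTE = 2
ACTIVE_PER_INFERENCE_S = 0.130 + 0.050  # inference time + Himax startup
ACTIVE_MA = 30.0                        # current during inference
SLEEP_MA = 0.316                        # 316 uA deep-sleep current
BATTERY_MAH = 2500.0

active_s = INFERENCES_PER_MINUTE * ACTIVE_PER_INFERENCE_S
avg_ma = average_current_ma(active_s, ACTIVE_MA, SLEEP_MA)
hours = runtime_hours(BATTERY_MAH, avg_ma)

if __name__ == "__main__":
    print(f"Active time per minute: {active_s:.2f} s")
    print(f"Average current: {avg_ma:.3f} mA")
    print(f"Estimated runtime: {hours:.0f} h ({hours / 24:.0f} days)")
```

Changing `INFERENCES_PER_MINUTE` shows how strongly the duty cycle drives battery life: at this rate the sleep current already accounts for more than half of the average draw.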

Improve Accuracy and Expand Battery Life for AI powered Cameras

Grovety selected Alif Ensemble due to the multi-core architecture with Ethos-U55 and Alif’s aiPM technology™, which allows fine-grained control over power domains.

https://www.youtube.com/watch?si=VLOXSndbf3BKtBeQ&v=4saoH3KZGb4&feature=youtu.be

Grovety is an embedded AI applications company offering support in firmware, toolchains, and applications. Antony Vasilev, Co-CEO at Grovety, set out on a mission to help the Persian leopards of Romania. After visiting a local national park, Antony discovered that park rangers were struggling to track and protect the endangered Persian leopard population. The park’s wildlife cameras were capturing poor footage and had low accuracy. False positives are not only frustrating but also drain battery life, because the camera keeps waking up unnecessarily.

In this webinar, you will learn how Grovety created and deployed an AI module on Alif Ensemble that improves accuracy, reduces false positives, and extends battery life for wildlife AI cameras.

www.arrow.com

Grovety at tinyML Asia 2023

November 16, 2023. At the tinyML Asia 2023 forum in Seoul, South Korea, Anthony Vasilev, Co-CEO of Grovety, a silver sponsor of the forum, showcased new Grovety developments for review: the GRC AI module and the GRC Dev Board.

The GRC AI module is a self-learning engine that performs Anomaly Detection and n-Class Classification on time-series data streams, providing full turnkey AI functionality for integration into products of various complexity. The AI Module is based on the ODL (On-Device Learning) computing approach for providing and enhancing AI scenarios. (https://github.com/Grovety/grc_sdk/blob/main/docs/GRC_AI-module.md)

The GRC Dev Board is a development kit and reference design that simplifies prototyping and testing of advanced industrial AIoT applications such as Anomaly Detection and n-Class Classification for predictive maintenance. (https://github.com/Grovety/grc_devboard/blob/main/docs/GRC_DevBoard_Description.md)

tinyML Talk

Grovety at tinyML Talk in September 2023

If you missed the recent #tinyMLTalks webcast "Minimizing Resource Usage in Microcontrollers for Cost-Effective Solutions" with Ilya Gozman, Senior Fellow, Chief AI Architect at Grovety Inc., hosted by Evgeni Gousev from Qualcomm, we have got you covered! The video is now available on the tinyML YouTube channel: https://www.youtube.com/watch?v=rBxpPesd2cs

We illustrated how Apache TVM, an open-source machine learning compiler, can significantly help reduce device cost and energy consumption. We also discussed our choice of Micro TVM and Arm Ethos-U55 on the Alif E5 SoC, detailing their advantages and how they align with our goals, and presented the results of our optimization efforts. Finally, we gave a sneak peek into the future of our TinyML efforts, including upcoming products, our goal of further refining networks while maintaining accuracy, and our strategies for ongoing optimization and development in the TinyML field.

Arm Tech Talk

Arm Tech Talk from Grovety: Saving wildlife by using AI-powered trap camera based on Arm Ethos-U55

As you may know, smart wildlife cameras are used today to help preserve biodiversity. Grovety cares about this, and so we gave a talk on how smart wildlife camera traps with AI capabilities powered by the Arm Ethos-U55 are being used in conservation efforts to save the endangered Persian leopard. Here’s the link to the talk: https://www.youtube.com/watch?v=VK_LWwz4mdQ

The presentation covers the challenges we had to overcome in architecture, component selection, the search for suitable neural networks, and tool choices to achieve results under a number of constraints. We look forward to sharing our insights and experience and hearing your thoughts in the comments. Let’s give the Arm ecosystem a head start in implementing AI in embedded devices together!

TVMcon 2023

Grovety at TVMcon 2023

In March 2023 we gave a short presentation at TVMcon 2023: "Improvements to Ethos-U55 support in TVM including CI on Alif Semiconductor boards". Our Chief AI Architect gave an overview of the changes we’ve made to the Ethos-U55 integration in TVM, including new operators, new variants of supported operators, and other features. You can see more details at the dedicated TVMcon 2023 link: https://www.youtube.com/watch?v=4hHvXyH-tWQ&list=PL_4zDggB-DBp81G1tAME9r0_P5IY9D700&index=2

Generating relocatable code for ARM processors

By upgrading the LLVM compiler, we solved a problem where neither LLVM nor GCC could generate correct position-independent code for Cortex-M controllers when the code runs from Flash memory rather than from RAM. Now the binary image of a program can be flashed to an arbitrary address and executed in place, without being relocated.
