GRC AI Add-on

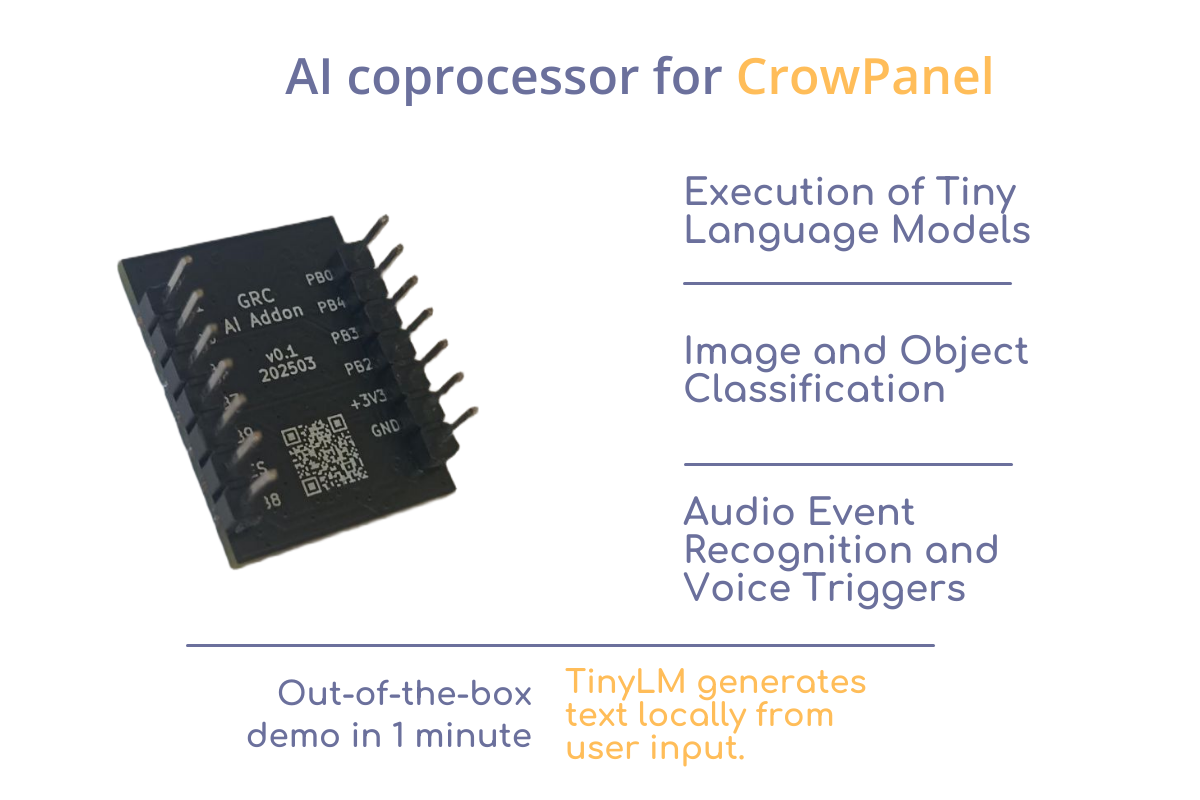

GRC AI Add-on for CrowPanel on HX6538

Run AI models on your CrowPanel without cloud, complexity, or extra boards. Build interactive, intelligent devices that generate stories, classify inputs, or run TinyLM models, all offline and on-device. Just plug it in.

GRC AI Add-on is a plug-in NPU module designed for CrowPanel, powered by the ultra-efficient Himax HX6538 microcontroller with an Arm Cortex-M55 CPU and an Arm Ethos-U55 NPU. It enables local neural network inference, which suits applications like text generation, classification, and voice interfaces.

Who It’s For

- Smart Device Developers → Rapid prototyping and hypothesis testing with TinyML
- Schools, Makerspaces, Tech Hubs → Practical demonstration of TinyLM/TinyML applications
- DIY Makers & AI Enthusiasts → Build offline AI projects with Tiny Language Models (TinyStories, command assistants, etc.)

Key Features

Demo: TinyStories

An interactive storytelling prototype based on the TinyStories Language Model. It showcases how compact hardware can be used for interactive natural language generation at the edge, with low power consumption and no internet required for model inference.

The user types or chooses a prompt on the CrowPanel touchscreen, for example, “Tell me a story about a princess.” The request is processed locally by a Tiny Language Model running on the GRC AI Add-on. Within seconds, a complete story is generated and displayed on the screen, with no internet connection or cloud services involved.

To run the TinyStories demo, a CrowPanel Advance is required for input and display. Download the CrowPanel firmware here. The same link also provides the latest firmware for the Add-on and full documentation.
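To make the prompt-to-story flow of the TinyStories demo more concrete, here is a minimal sketch of the autoregressive loop that language models generally follow: take the tokens so far, predict the next token, append it, and stop at an end marker or a length limit. This is not the Add-on firmware or the GRC SDK. `TOY_MODEL` and `generate` are hypothetical names, and a hand-written lookup table stands in for the trained network.

```python
# Hypothetical stand-in for a trained model: maps the last token to the next one.
# A real Tiny Language Model would compute this prediction with a neural network.
TOY_MODEL = {
    "once": "upon",
    "upon": "a",
    "a": "time",
    "time": "<end>",
}


def generate(prompt: str, max_new_tokens: int = 16) -> str:
    """Greedy autoregressive generation over whitespace-separated tokens."""
    tokens = prompt.lower().split()
    if not tokens:
        return ""
    for _ in range(max_new_tokens):
        # Predict the next token from the most recent one (toy bigram lookup).
        next_token = TOY_MODEL.get(tokens[-1], "<end>")
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return " ".join(tokens)


print(generate("Once"))  # prints "once upon a time"
```

On the actual hardware the prediction step is where the NPU does its work; the surrounding loop stays this simple.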
Specs

AI Core

- Microcontroller: Himax HX6538-A06TDFG
- CPU: Arm Cortex-M55
- NPU: Arm Ethos-U55
- SRAM: 2 MB
- ROM: 4 MB
- External Flash: 128 Mbit (16 MB) QSPI NOR Flash

Form Factor & Pinout

- Add-on layout: 14-pin dual-row (7×2) header
- Pitch: 2.54 mm
- Board size: ~20×25 mm

Power & Performance

- Power Supply: 3.3 V (regulated by CrowPanel)
- Typical Consumption:
  - Idle: <1 mA
  - Active inference: ~10–20 mA depending on model
- Designed for: battery- and solar-powered deployments

Storage & Model Deployment

- Flash for models: 16 MB QSPI Flash
- Preloaded example: TinyStories (text-generation LLM)
- Model update: via firmware flashing or QSPI update tools
- Frameworks supported: CMSIS-NN, GRC SDK, TensorFlow Lite for Micro (adapted)

Links

https://github.com/Grovety/grc_ai_add-on
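For battery-powered designs, the Power & Performance figures in the specs can be turned into a rough runtime estimate. The sketch below takes the upper bounds from the specs (1 mA idle, 20 mA active inference) and assumes a 1000 mAh battery and a 5% inference duty cycle; both of those values are illustrative assumptions, not product data. It covers the Add-on only and ignores the CrowPanel display, regulator losses, and battery self-discharge, so treat the result as an optimistic ceiling.

```python
def average_current_ma(idle_ma: float, active_ma: float, duty_cycle: float) -> float:
    """Time-weighted average current for a device active `duty_cycle` of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return active_ma * duty_cycle + idle_ma * (1.0 - duty_cycle)


def battery_life_hours(capacity_mah: float, avg_current_ma: float) -> float:
    """Ideal runtime in hours: capacity divided by average draw."""
    if avg_current_ma <= 0:
        raise ValueError("avg_current_ma must be positive")
    return capacity_mah / avg_current_ma


# Spec upper bounds: 1 mA idle, 20 mA active. Assumed: 5% duty cycle, 1000 mAh battery.
avg = average_current_ma(idle_ma=1.0, active_ma=20.0, duty_cycle=0.05)
hours = battery_life_hours(capacity_mah=1000.0, avg_current_ma=avg)
print(f"average draw: {avg:.2f} mA, runtime: {hours:.0f} h ({hours / 24:.1f} days)")
```

With these assumptions the average draw is 1.95 mA, which gives roughly 513 hours, or about three weeks. Changing the duty cycle shows how strongly the inference rate drives the budget.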
