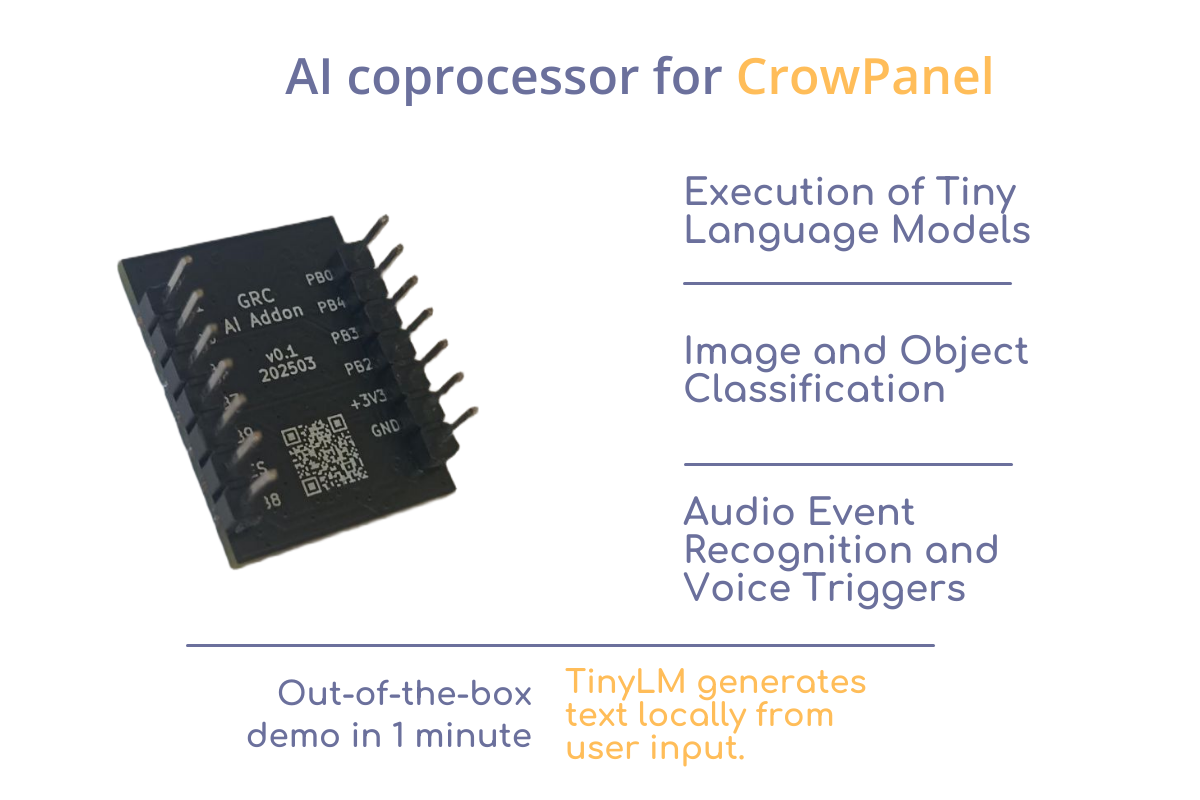

GRC AI Add-on

GRC AI Add-on for CrowPanel on HX6538

Run AI models on your CrowPanel without cloud, complexity, or extra boards. Build interactive, intelligent devices that generate stories, classify inputs, or run TinyLM models, all offline and on-device. Just plug it in.

GRC AI Add-on is a plug-in NPU module designed for CrowPanel, powered by the ultra-efficient Himax HX6538 microcontroller with an Arm Cortex-M55 CPU and an Ethos-U55 NPU. It enables local neural network inference, which suits applications like text generation, classification, and voice interfaces.

Who It’s For

- Smart Device Developers: rapid prototyping and hypothesis testing with TinyML
- Schools, Makerspaces, Tech Hubs: practical demonstration of TinyLM/TinyML applications
- DIY Makers & AI Enthusiasts: build offline AI projects with Tiny Language Models (TinyStories, command assistants, etc.)

Key Features

Demo: TinyStories

An interactive storytelling prototype based on the TinyStories Language Model. It showcases how compact hardware can be used for interactive natural language generation, all at the edge, with low power consumption and no internet required for model inference.

The user types or chooses a prompt on the CrowPanel touchscreen, for example, “Tell me a story about a princess.” The request is processed locally by a Tiny Language Model running on the GRC AI Add-on. Within seconds, a complete story is generated and displayed on the screen, with no internet connection or cloud services involved.

To run the TinyStories demo, a CrowPanel Advance is required for input and display. Download the CrowPanel firmware here. The same link also provides the latest firmware for the Add-on and full documentation.
Specs

AI Core

- Microcontroller: Himax HX6538-A06TDFG
- CPU: Arm Cortex-M55
- NPU: Arm Ethos-U55
- SRAM: 2 MB
- ROM: 4 MB
- External Flash: 128 Mbit (16 MB) QSPI NOR Flash

Form Factor & Pinout

- Add-on layout: 14-pin dual-row (7×2) header
- Pitch: 2.54 mm
- Board size: ~20×25 mm

Power & Performance

- Power Supply: 3.3 V (regulated by CrowPanel)
- Typical Consumption:
  - Idle: <1 mA
  - Active inference: ~10–20 mA depending on model
- Designed for: battery- and solar-powered deployments

Storage & Model Deployment

- Flash for models: 16 MB QSPI Flash
- Preloaded example: TinyStories (text-generation LLM)
- Model update: via firmware flashing or QSPI update tools
- Frameworks supported: CMSIS-NN, GRC SDK, TensorFlow Lite for Micro (adapted)

Links

https://github.com/Grovety/grc_ai_add-on

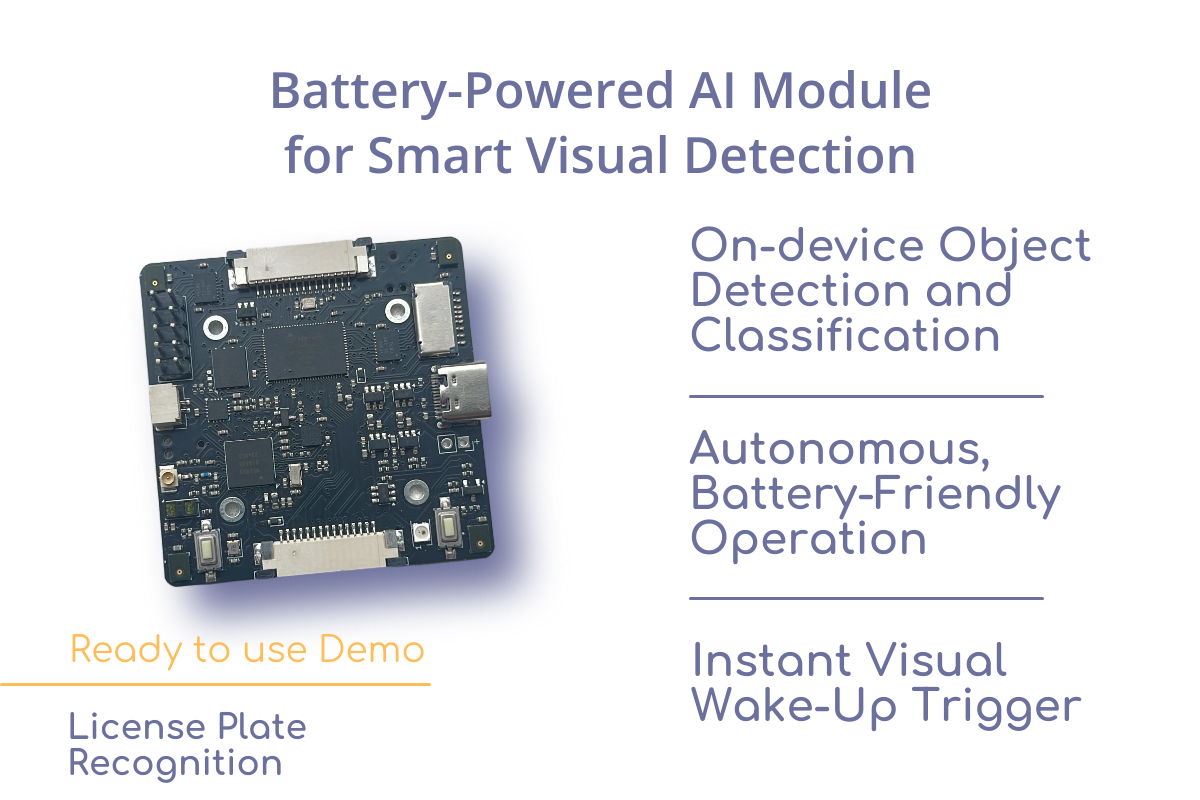

Powered Edge AI Module on Himax

Battery-Powered Edge AI Module on HX6538

Build smarter, energy-efficient devices with local AI that sees and reacts, without the cloud, without latency, and without constant power draw.

Visual Wake Word: just like a smart speaker listens for a voice cue, this module “wakes up” when it sees a human, vehicle, animal, or another object you choose.

Autonomous operation, long battery life, and instant response make it ideal for:

- Smart Doorbells: Instantly detect a person at the door and trigger alerts, with no cloud or Wi-Fi required.
- Retail People Counter: Low-power presence detection and classification to track footfall and store occupancy.
- Wireless Security Node: Battery-powered edge device with visual wake-up; sends notifications when a person or vehicle is detected.
- Access Control System: Local AI detects authorized personnel or license plates, triggering gate or door mechanisms.
- Smart Bird Feeder / Wildlife Monitor: Recognizes specific species and logs sightings autonomously in the field.
- Construction Site Monitoring: Detects unauthorized human presence or restricted zone entries in low-power mode.

Ready-to-use Example Applications

- Gate: Standalone ANPR Module for Gate & Barrier. A fully autonomous, battery-friendly module that opens your barrier or gate when it detects a recognized license plate.
- Wild: Autonomous long-term AI camera trap. A battery-powered AI camera trap for wildlife monitoring. It detects animal and bird species using onboard neural networks, with no cloud or internet required.

What can you do with it?

With this module, you can:

- Run neural networks for object detection and classification (e.g., animals, vehicles, license plates);
- Capture and process audio and video directly on the device;
- Develop fully autonomous systems that run on batteries or rechargeable power;
- Integrate it as a drop-in component into your final product.
Power Consumption and Battery Life

Sleep Mode:

- Base current consumption: 316 µA
- Applies when all peripherals are disabled (deep sleep)

Inference Mode (refers to detection & recognition networks):

- Active duration per inference: 130 ms, plus 50 ms Himax startup time per inference
- Current consumption during inference: ~30 mA
- Includes: activity of the Himax HX6538 and Ethos-U55 NPU, including internal handling of camera and peripheral interfaces. External peripheral power consumption (camera, ToF sensor, mic, etc.) is not included.

Battery Life Example: Periodic Inference (2× per minute)

Assuming the device performs inference twice per minute, the average current is calculated as:

- Active time per minute: 0.36 s (2 × (130 + 50) ms)
- Sleep time per minute: 59.64 s
- Average current: (0.36 s × 30 mA + 59.64 s × 0.316 mA) / 60 s ≈ 0.494 mA

With a 2500 mAh battery, expected runtime: 2500 mAh / 0.494 mA ≈ 5060 hours (about 210 days), excluding external peripherals.

This makes the platform well suited to long-term deployments in battery-powered applications with periodic AI inference, such as remote sensors and monitoring systems.

Power Consumption Data Available

When you purchase this module, we will also provide a detailed document outlining its power consumption in various operating modes, helping you accurately estimate energy usage in your final device.

Fast time to market

Use the module as a finished component: build your enclosure, add your model, and go to production.

- Open-source stack: Full firmware, Android app, and documentation available to accelerate development and customization.
- Ready-to-use platform: Includes camera interface, microphone array, microSD storage, ToF sensor, IMU, and Bluetooth, all pre-integrated.

Open-source projects for rapid product development

An open-source object detection and classification project that you can customize for your specific use case.

Ready-to-Use Software Ecosystem

The module comes with a complete open-source software stack, making it easy to start development and deploy real-world AI solutions without writing low-level code.
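The battery-life example in the Power Consumption and Battery Life section can be reproduced, and adapted to other inference rates, with a short duty-cycle calculation. The sketch below is illustrative only: the function names are hypothetical, the default values are the figures quoted on this page (316 µA sleep, ~30 mA during inference, 130 ms + 50 ms per inference), and it ignores external peripherals, regulator losses, and battery self-discharge, so real runtimes will likely be shorter.

```python
def average_current_ma(inferences_per_minute,
                       active_ms=130.0,    # inference duration quoted on this page
                       startup_ms=50.0,    # Himax startup time per inference
                       active_ma=30.0,     # approximate current during inference
                       sleep_ua=316.0):    # deep-sleep base current
    """Time-weighted average current over one minute, in mA."""
    active_s = inferences_per_minute * (active_ms + startup_ms) / 1000.0
    if active_s > 60.0:
        raise ValueError("inference rate exceeds what fits in one minute")
    sleep_s = 60.0 - active_s
    sleep_ma = sleep_ua / 1000.0
    return (active_s * active_ma + sleep_s * sleep_ma) / 60.0


def runtime_hours(capacity_mah, avg_ma):
    """Idealized runtime: battery capacity divided by average current."""
    return capacity_mah / avg_ma


# Compare a few inference rates for a 2500 mAh battery.
for rate in (1, 2, 10, 60):
    avg = average_current_ma(rate)
    hours = runtime_hours(2500.0, avg)
    print(f"{rate:>3} inferences/min: {avg:.3f} mA average, "
          f"{hours:.0f} h (~{hours / 24:.0f} days)")
```

At two inferences per minute this gives about 0.494 mA and roughly 5060 hours. Because the sleep current accounts for most of the average at low inference rates, the wake-up interval is the main lever once peripherals are powered down.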
Supported Frameworks and Toolchains:

- TensorFlow Lite Micro: for running quantized neural networks efficiently on MCUs
- CMSIS-NN: Arm-optimized neural network kernels
- Standard Arm toolchains: compatible with Keil Studio, VSCode + PlatformIO

Everything is Open-Source:

- Preloaded firmware, example models, and full API documentation
- Android demo app with source code
- Easy model swap (replace the pre-trained network with your own)

Example Projects & References:

- Visual Wake Word demo on Arm’s Corstone-320 MLEK: https://community.arm.com/arm-community-blogs/b/ml-ai/posts/ml-ek-vww
- Official Visual Wake Word dataset and benchmark: https://github.com/tensorflow/tensorflow/blob/master/tensorflow/lite/micro/examples/person_detection/README.md
- Himax SDK and NPU documentation: https://www.himax.com.tw/products/ai-sensors/ai-accelerators/

Free Support for Buyers

When you purchase this module, you get free technical support and expert consultation to help you design and launch your own AI-powered solution. Whether you’re building a wildlife camera, smart gate, or custom embedded device, we’re here to help from PoC to production.

End-to-End Development Workflow

1. Platform Evaluation

You start with a pre-configured dev kit (hardware + firmware + pre-trained model). Run the out-of-the-box demo (e.g., animal detection) to test:

- Inference performance (FPS, accuracy)
- API integration (REST/gRPC/edge SDK)
- Hardware compatibility (CPU/GPU/NPU utilization)

Review the API specification and model compatibility guidelines via the pre-built demo.

2. Custom Model Integration

Swap the default demo neural network for a task-specific model (e.g., ANPR for local license plates) via:

- DIY path: adapt existing models;
- White-glove service: Grovety delivers a custom model (optimized for target hardware).

3. Prototype to Production

- Modify the open-source board firmware to implement custom business logic;
- Enhance the mobile app with required features and UI/UX changes;
- Finalize the hardware: use the existing enclosure or develop custom hardware.

Outcome: A ready-to-sell solution with low development costs and short time-to-market.

Subsystems & Their Functions

Under Himax control

- Image capture from camera module
- Audio acquisition from 4-microphone array
- Image processing using built-in hardware accelerators
- Image/audio processing using neural networks and Arm Ethos-U55
- Data exchange with nRF52833 via SPI/I2C interfaces
- microSD card operations

Under nRF52833 control

- Himax communication via SPI and/or I2C interfaces
- Host communication via Bluetooth and/or USB-UART interfaces
- Power management and device configuration (Himax, accelerometer, ToF sensor)
- Battery charging control and state monitoring
- LED control and button state reading

Hardware Components List

1. Processing Units

- Main MCU: Himax HX6538. Handles primary tasks:
  - Neural network acceleration (Ethos-U55 NPU)
  - Camera interfacing (MIPI CSI-2)
  - Microphone array processing (PDM/PCM)
  - microSD card storage management
- Peripheral MCU: Nordic nRF52833. Manages:
  - Bluetooth 5.2 Low Energy connectivity
  - Power management (battery/DC-in)
  - Time-of-Flight (ToF) sensor data acquisition
  - Inertial Measurement Unit (IMU) processing

2. Sensors & Peripherals

- Imaging:
  - Camera-In (MIPI interface): primary vision input
  - Camera-Out (debug/auxiliary feed)
- Audio:
  - Dual PDM microphones, beamforming-capable
- Environmental:
  - ToF sensor (VL53L5CX or equivalent)
  - 6-axis IMU (Accel + Gyro)

3. Storage

- microSD card slot: for high-capacity logging (video/audio)
